We at HackerEarth love pair programming. Before you call us out for being biased, though, hear us out. Over the years we have spent perfecting our interview platform FaceCode, we have heard from many hiring managers that using a pair programming interview tool is one of the best ways to assess a candidate’s coding abilities in real time.

Let’s look at what these managers have told us:

- With modern pair programming interview tools, employers must be well-informed about the coders’ unique skill set, ability to collaborate and solve problems, and analytical thinking

- Interviewers must be able to deduce the coders’ agility in coding, the complexity of the code used, and their proficiency in using features such as CodeEditor and auto-suggest

- A modern interview approach must evaluate how well coders handle ambiguity. It must highlight their attitude toward the challenge and aptitude for learning

- The interviewer learns about the interviewee’s skills and personality, while the interviewee learns about whom they will be working with and what a typical workday looks like

What is pair programming?

Pair programming is a collaborative coding technique where two programmers work together at one workstation. One, the “driver,” writes code while the other, the “navigator,” reviews each line of code as it is typed in. The roles switch frequently to keep both partners engaged. This approach not only improves code quality by facilitating immediate feedback and error correction but also enhances learning and knowledge sharing between the pair. It’s particularly effective in tackling complex problems and learning new technologies. Companies often use pair programming to foster a collaborative environment and develop a more cohesive team dynamic, ultimately leading to more robust and error-free software.

What is a pair programming interview?

A pair programming interview is a style of interviewing candidates where the interviewer and candidate share a coding platform to solve a programming problem together. With pair programming, you can test three skills in developers: problem-solving, teamwork, and communication.

It can be a great way to identify talented developers. That’s not to say pair programming interviews (a.k.a. pair coding interviews) cannot go wrong.

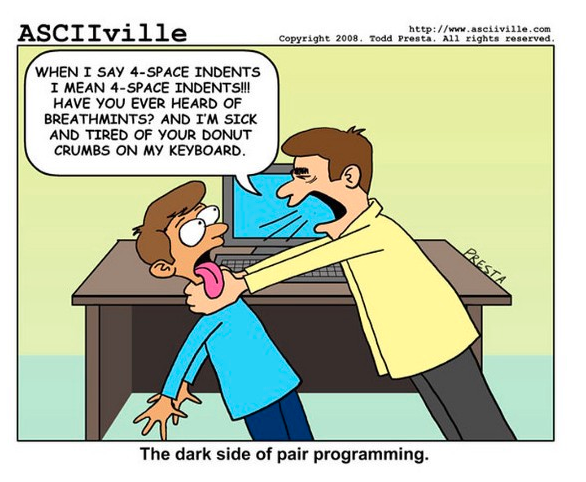

DISCLAIMER: No known coders have been harmed during pair programming interviews. Also, breath mints are just good to carry along for any scenario. Just saying.

***

Laughs aside, the main reason why pair programming interviews go south is that the rules of engagement are either not specified or not followed. As a wise man said, a football game without rules is just a brawl. So, let’s list some of the oft-repeated mistakes in a pair coding interview and how one can avoid them.

Pair Programming Interview Mistakes

Mistake 1: Not agreeing on rules beforehand

Pair programming has a simple structure. There’s a DRIVER and a NAVIGATOR. Simply put, the driver writes code while the other, the observer or navigator, reviews each line of code as it is typed in.

There are many ways this driver-navigator relationship can work:

- Ping-Pong pairing: In this style, Developer A starts the process by writing a failing test, the ‘PING’. Developer B then writes the implementation to make it pass, i.e., the ‘PONG’. Each set is then followed by refactoring the code together.

- Strong-style pairing: In this style, the navigator is usually the person with more experience in the setup or task at hand, while the driver has less experience (with the language, the tool, or the codebase, or because they are fresh out of college). The navigator guides the driver through the task.

- Pair development: Pair development is not a ‘style’ of pair programming per se. It’s more of a methodology. While the above two styles can be used for developing code in real time, pair development can be used to create a user story or feature. This goes beyond just coding and allows the pair to handle many different tasks as a team.
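To make the Ping-Pong style concrete, here is a minimal sketch of one round in Python. The leap-year task and the names `test_is_leap_year` and `is_leap_year` are hypothetical, chosen only for illustration; any small, testable problem would work just as well.

```python
# PING (Developer A): write the test first. If it were run at this point,
# it would fail, because is_leap_year (a hypothetical example function)
# does not exist yet.
def test_is_leap_year():
    assert is_leap_year(2024)       # divisible by 4
    assert not is_leap_year(1900)   # century not divisible by 400
    assert is_leap_year(2000)       # century divisible by 400


# PONG (Developer B): write the simplest implementation that makes
# Developer A's test pass.
def is_leap_year(year: int) -> bool:
    return year % 4 == 0 and (year % 100 != 0 or year % 400 == 0)


# The test now passes. The pair refactors together, then swaps:
# Developer B writes the next failing test.
test_is_leap_year()
```

In an interview, the same rhythm gives both people a natural moment to talk: once when agreeing on what the test should assert, and again when refactoring.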

So, before you invite a candidate over for a code pair interview, ensure you know which style you are going to use and lay down the rules clearly. If you are switching roles between driver and navigator, make sure that the rules of discussion and expectations are clear from the get-go.

Mistake 2: Lack of proper conflict resolution mechanisms

It is important to settle conflicts well as a pair, and one way of doing it is to agree at the outset on which role has the final say. Between the driver and the navigator, one role needs to have the ‘casting vote’.

That said, this mechanism should not deter either member of the pair from asking questions or raising red flags. The goal of the pair programming interview is to provide the candidate with something close to a ‘real-world experience’, i.e., they work on actual problems that your team solves in its workday. At the same time, the interviewer gets a first-hand glimpse of the candidate’s problem-solving skills and ability to collaborate.

Don’t forget this in an attempt to be ‘right’ during your pair programming routine. Agreeing to a mutually suitable arrangement at the outset aligns expectations and provides a fairly straightforward method of conflict resolution.

Mistake 3: Thinking there is just one ‘right’ answer

There are 11287398173 ways to write FizzBuzz. Remember this when you are in the middle of your next pair programming interview.
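As a small illustration, here are two of those ways, sketched in Python. The function names are ours and purely illustrative. Neither version is the ‘right’ one; a candidate could reasonably write either.

```python
def fizzbuzz_branching(n: int) -> str:
    """Explicit branches, checking the combined case first."""
    if n % 15 == 0:
        return "FizzBuzz"
    if n % 3 == 0:
        return "Fizz"
    if n % 5 == 0:
        return "Buzz"
    return str(n)


def fizzbuzz_concat(n: int) -> str:
    """Build the word piece by piece; fall back to the number if it is empty."""
    word = ("Fizz" if n % 3 == 0 else "") + ("Buzz" if n % 5 == 0 else "")
    return word or str(n)


# Both approaches agree on every input in the classic 1..100 range.
assert all(fizzbuzz_branching(i) == fizzbuzz_concat(i) for i in range(1, 101))
```

The first version is easier to read at a glance; the second is easier to extend if a new rule is added later. Either trade-off is a good conversation starter in an interview.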

As an interviewer, it is very easy to make the mistake of believing that there is just one right way to approach a problem. Experienced hiring managers know that while it is perfectly all right to have an answer in mind for a given question, it is also important to listen to the interviewee’s answer.

Most of the time, you’ll find that the candidate’s approach is different from yours. If you keep an open mind, you might even be surprised by their creativity! Rigidity in thought is a no-no for any interviewer; this typically demonstrates that they are not open to new ideas and only serves to alienate candidates.

This is also important for interviewees. Many times, candidates get trapped in the rabbit hole of ‘pleasing’ the interviewer. They look for solutions that they think will appease the interviewer. It is important to be aware of this behavior. Use the opportunity to showcase your skill-set, instead of behaving like a mind reader and trying to say and do things that will impress the manager. Ask clarifying questions, understand the boundary conditions or the corner cases, and then do your own thing!

Mistake 4: Not communicating enough

Okay, we get it. Not everyone likes chatter when they are coding. Some coders like music, others like radio silence.

The whole purpose of a pair-programming interview is to communicate. Let’s rephrase that a bit. The sole purpose of a pair-programming interview is to communicate effectively with your partner and build something collaboratively.

Interviewers need to set the tone here. Please tell your candidates clearly what kind of communication you expect from them. Do you want them to finish their coding and then walk you through their code, or do you want a play-by-play commentary? While doing so, take care not to come across as intimidating, and allow the candidate the flexibility to understand and solve the problem in their own time and space.

Interviewees would do better to ditch the YOLO approach on this one and use the session to show their planning and communication skills.

Benefits of pair programming interviews

Pair programming interviews offer a number of benefits to both employers and candidates.

Benefits for employers:

Assess real-time problem-solving skills: Pair programming interviews allow employers to see how candidates approach and solve problems in a real-time setting. This is much more informative than traditional whiteboard interviews, which can be more artificial and less indicative of a candidate’s actual coding skills.

Evaluate communication and teamwork skills: Pair programming interviews also allow employers to evaluate candidates’ communication and teamwork skills. This is important because tech workers often need to be able to work effectively with others on complex projects.

Identify potential culture fits: Pair programming interviews can also help employers to identify potential culture fits. By observing how candidates interact with the interviewer, employers can get a better sense of whether candidates would be a good fit for the company culture.

Benefits for candidates:

More skill-oriented process: Pair programming interviews give candidates a more realistic opportunity to demonstrate their skills. Candidates are able to work with the interviewer to solve a problem, and they are able to ask questions and get feedback as they go. This can help candidates to perform better than they might in a traditional whiteboard interview.

Better understanding of the company culture: Pair programming interviews also give candidates a better understanding of the company culture. By interacting with the interviewer and seeing how the interviewer works, candidates can get a sense of how the company values collaboration and teamwork.

Opportunity to network with potential colleagues: Pair programming interviews can also be an opportunity for candidates to network with potential colleagues. By working with the interviewer, candidates can learn more about the company’s projects and technologies. Candidates can also make a good impression on the interviewer and other potential colleagues.

Tips to conduct a pair programming interview

Ensuring your pair programming interviews are effective requires a balanced approach:

Set clear expectations: Before the session, clearly communicate the objectives, tools to be used, and the problem’s scope.

Use real-world scenarios: Instead of abstract problems, use challenges that reflect real tasks your team faces. This provides valuable insights into the candidate’s practical skills.

Ensure role clarity: Specify who is the “driver” (the one writing the code) and who is the “navigator” (the one reviewing and suggesting), and switch roles midway to ensure a balanced assessment.

Prepare a list of pair programming interview questions: Draw up questions in advance that check the candidate’s ability to design code.

Maintain respectful communication: Encourage open dialogue. The candidate should feel comfortable asking questions, suggesting alternatives, or admitting when they don’t know something.

Embrace silence: Allow the candidate to think. Not every moment needs to be filled with talk.

Provide tools and documentation: Ensure the candidate has access to necessary tools and can refer to documentation if needed. This mirrors real-world conditions.

Focus on the Journey, NOT just the Solution: Remember, the goal is to understand how the candidate thinks and collaborates. A perfect solution isn’t the only indicator of a good fit.

Conclude with feedback: Dedicate the last few minutes to providing feedback. Highlight what went well and the areas for improvement. This can be incredibly valuable for both the candidate and your company’s reputation.

When done right, pair programming can yield awesome results!

These are just some of the things we have learned from our discussions with hiring managers and candidates. We hope that they help you in your next interview. Another important aspect of a good pair programming interview is using the right tool, and HackerEarth’s FaceCode can help you with that. The key to having a good technical code pair interview is creating a familiar environment for the candidates, so they can relax and focus on the task at hand. FaceCode, with its built-in code editor and easy-to-access question library, allows you to do that easily.

We hope you ace your next pair programming interview – whether you are an interviewer or a candidate. Good luck!